Understanding Game Theory: How Strategic Thinking Shapes Our World

Have you ever wondered why nations hesitate to reduce their nuclear arsenals, even when peace is clearly beneficial? Or why two businesses sometimes continue harmful competition instead of cooperating for mutual gain? The answers lie in Game Theory, a fascinating area of study that reveals hidden logic behind decision-making, from global politics to everyday interactions.

At its core, Game Theory is the science of strategy - it explores how individuals, businesses, or even entire nations behave in situations where their decisions affect one another. Simply put, it helps us understand why rational people might sometimes do seemingly irrational things, purely because they anticipate how others might respond.

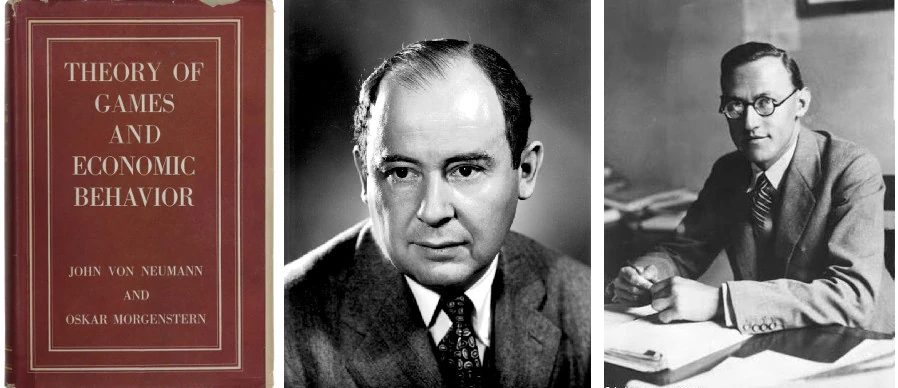

The Birth of Game Theory

Game theory's formal beginning is often attributed to mathematician John von Neumann and economist Oskar Morgenstern, who published their book Theory of Games and Economic Behavior in 1944. Initially developed as a mathematical framework, game theory was first applied to economics but quickly spread to political science, psychology, biology, and computer science.

John Nash later revolutionized the field in the 1950s with his concept of Nash Equilibrium, work that eventually earned him the Nobel Prize in Economics in 1994. What began as abstract mathematics evolved into a powerful interdisciplinary tool for analyzing strategic interactions across virtually all domains of human activity.

Breaking Down the Basics

Game theory revolves around a few straightforward ideas. First, there are players - anyone making strategic decisions, whether they're shoppers, CEOs, or presidents. Each player selects strategies, essentially their game plan, aiming for outcomes called payoffs, which could be profits, safety, or other benefits. Analysts then look for an equilibrium, a stable situation where nobody can gain an advantage by changing their decision alone. The best-known version of this idea is the Nash Equilibrium.

Games in game theory can be broadly divided into two types: cooperative games, where players can team up with binding agreements, and non-cooperative games, where each player acts independently because no agreement can be enforced.

The Prisoner's Dilemma: When Rationality Seems Irrational

Consider the classic scenario of two suspects, Alex and Blake. Both are arrested for a crime and questioned separately by the police. Each faces the same choice: remain silent (cooperate) or betray their partner (defect). The twist? The consequences depend on what the other chooses:

- If both stay silent, each gets 1 year in prison.

- If one betrays the other, the betrayer goes free, while the silent partner gets 3 years.

- If both betray each other, each gets 2 years.

What's fascinating here is the paradox it creates. Individually, betrayal always seems smarter - no matter what your partner does, betraying always gives you a better deal personally. But here's the catch: if both think like this, they'll both betray each other, ending up with two years each - a worse outcome than if they'd simply stayed silent.
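The dominance argument can be checked mechanically. Here is a minimal Python sketch that uses the prison terms listed above; the names `YEARS`, `MOVES`, and `best_reply` are purely illustrative.

```python
# Prison terms in years as (Alex, Blake); fewer years is better.
YEARS = {
    ("silent", "silent"): (1, 1),
    ("silent", "betray"): (3, 0),
    ("betray", "silent"): (0, 3),
    ("betray", "betray"): (2, 2),
}
MOVES = ("silent", "betray")


def best_reply(partner_move):
    """Alex's sentence-minimizing move against a fixed move by Blake."""
    return min(MOVES, key=lambda mine: YEARS[(mine, partner_move)][0])


for partner_move in MOVES:
    print(f"Blake plays {partner_move!r}: Alex's best reply is {best_reply(partner_move)!r}")

# Betrayal is the best reply either way, yet mutual betrayal (2, 2)
# leaves both suspects worse off than mutual silence (1, 1).
```

Because the game is symmetric, the same check holds for Blake, which is why mutual betrayal is the only stable outcome.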

This tension between individual benefit and collective good isn't limited to crime dramas. It appears everywhere: countries stuck in arms races, companies reluctant to cut pollution, or even individuals overusing shared resources like public parks or fisheries (known as the “tragedy of the commons”).

Zero-Sum Games: Pure Competition

Another fascinating type of game theory situation is the zero-sum game. Think of a poker game or a football match: one player's win is exactly another's loss. The total prize doesn't change - it's simply redistributed. A classic example is “Matching Pennies,” where two people each secretly choose heads or tails:

- If the choices match, Player 1 wins a dollar.

- If the choices differ, Player 2 wins a dollar.

Here, cooperation isn't possible, as any advantage gained by one directly harms the other. The best strategy? Be unpredictable. Each player randomly picks heads or tails with equal chances.

The payoff matrix for Matching Pennies lists the payoff for every possible outcome. Notice that every cell sums to zero: when Player 1 wins $1, Player 2 loses $1, and vice versa. There is no “win-win” here. Unlike the Prisoner's Dilemma, no amount of communication or trust can change this - the game is purely adversarial. The only optimal strategy is to be completely unpredictable (a 50/50 random mix), because any pattern your opponent detects becomes an exploitable weakness.
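The 50/50 claim can be verified with a little arithmetic. The sketch below computes Player 1's expected winnings when both players randomize, then asks how badly Player 1 does against the most damaging reply. The function names are illustrative assumptions, not part of any library.

```python
def expected_payoff(p1_heads, p2_heads):
    """Player 1's expected winnings in dollars when both players randomize."""
    p_match = p1_heads * p2_heads + (1 - p1_heads) * (1 - p2_heads)
    # Player 1 wins $1 on a match and loses $1 on a mismatch.
    return p_match * 1 + (1 - p_match) * (-1)


def worst_case(p1_heads):
    """Player 1's payoff against Player 2's most damaging reply.

    The payoff is linear in Player 2's mix, so checking the two pure
    replies (always tails, always heads) is enough.
    """
    return min(expected_payoff(p1_heads, q) for q in (0.0, 1.0))


for p in (0.5, 0.7, 1.0):
    print(f"P(heads) = {p}: worst-case expected payoff = {worst_case(p):+.2f}")
```

With an even mix the worst case is exactly zero. Any bias (say 70% heads) gives Player 2 a reply that makes Player 1 lose money on average.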

Real-world zero-sum scenarios include competitive sports, poker games, short-term stock trading, and elections with limited seats.

Coordination Games: When Teamwork is Everything

Unlike zero-sum games, coordination games are about working together, even if each person has slightly different preferences. Imagine Alice and Bob planning an evening out: Alice prefers the Opera, Bob prefers Football - but both would rather be together than alone. If they fail to coordinate, neither enjoys the night. Their challenge isn't competition; it's simply picking the same place.

The matrix shows two highlighted cells - Opera/Opera (3,2) and Football/Football (2,3) - where the numbers represent each player's happiness (Alice's score, Bob's score). At the Opera, Alice is happiest (3) and Bob is content (2); at Football it's reversed. Both cells are Nash equilibria, meaning neither player wants to switch once they're there. The off-diagonal cells are (0,0): if Alice goes to the Opera while Bob goes to Football (or vice versa), they end up alone - and being apart is what they both hate most, regardless of the venue. The challenge isn't selfishness (as in the Prisoner's Dilemma) or competition (as in zero-sum games) - it's simply agreeing on which equilibrium to land on.
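To see why exactly those two cells are equilibria, one can test every cell for a profitable one-sided switch. This is a small sketch using the happiness scores quoted above; `is_nash` is a hypothetical helper name.

```python
from itertools import product

VENUES = ("Opera", "Football")
# Happiness scores as (Alice, Bob), taken from the matrix described above.
PAYOFFS = {
    ("Opera", "Opera"): (3, 2),
    ("Opera", "Football"): (0, 0),
    ("Football", "Opera"): (0, 0),
    ("Football", "Football"): (2, 3),
}


def is_nash(alice, bob):
    """True if neither player gains by switching venue alone."""
    alice_ok = all(PAYOFFS[(alice, bob)][0] >= PAYOFFS[(alt, bob)][0] for alt in VENUES)
    bob_ok = all(PAYOFFS[(alice, bob)][1] >= PAYOFFS[(alice, alt)][1] for alt in VENUES)
    return alice_ok and bob_ok


equilibria = [cell for cell in product(VENUES, repeat=2) if is_nash(*cell)]
print(equilibria)  # [('Opera', 'Opera'), ('Football', 'Football')]
```

The check only covers pure strategies; it says nothing about which of the two equilibria the couple will actually reach.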

Such coordination challenges are all around us: deciding whether to drive on the right or left side of the road, agreeing on meeting points and times, or even adopting technology standards like USB ports or smartphone chargers. These games usually have multiple good solutions, known as multiple Nash equilibria, but players must find a way to coordinate effectively - often relying on cultural or social cues, called focal points.

Another intriguing example is the “Stag Hunt,” where hunters must choose between chasing a large stag together (high reward but risky) or individually hunting smaller rabbits (safer but lower reward). The ideal outcome requires trust and coordination, highlighting how critical these are in achieving shared goals.

Repeated Games: When Actions Have Future Consequences

In real life, we rarely interact with others just once. The dynamic changes dramatically when games are played repeatedly. In what are known as iterated games, strategies like “tit-for-tat” emerge - where you start by cooperating and then mirror whatever your opponent did last time.

Around 1980, political scientist Robert Axelrod invited academics to submit computer programs that would compete in a repeated Prisoner's Dilemma tournament, an experiment he later described in his 1984 book The Evolution of Cooperation. Tit-for-tat, the shortest and simplest program submitted, won. Why does it work so well? Three reasons. It's nice - it never picks a fight, so it builds trust quickly with anyone willing to cooperate. It's tough - if you betray it, it hits back immediately, so bullies learn fast that cheating doesn't pay. And it's forgiving - one retaliation is enough. The moment you go back to cooperating, so does it. No grudges, no spirals of revenge. This combination means tit-for-tat gets along with friendly players, stands up to hostile ones, and never gets stuck in pointless feuds.
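The whole strategy fits in one line of code. The sketch below plays tit-for-tat against a made-up opponent script (an assumption chosen for illustration) so the nice, tough, and forgiving behavior is visible in the output. "C" means cooperate and "D" means defect.

```python
def tit_for_tat(opponent_history):
    """Cooperate first, then copy the opponent's previous move."""
    return opponent_history[-1] if opponent_history else "C"


def play(opponent_moves):
    """Tit-for-tat's move in each round, seeing only the opponent's earlier moves."""
    my_moves = []
    for round_index in range(len(opponent_moves)):
        my_moves.append(tit_for_tat(opponent_moves[:round_index]))
    return my_moves


# Hypothetical opponent: cooperates, defects once, then cooperates again.
opponent = ["C", "D", "C", "C", "C"]
print(play(opponent))  # ['C', 'C', 'D', 'C', 'C']
```

The single "D" in the output is the one-round retaliation; cooperation resumes as soon as the opponent's previous move was cooperative.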

The illustration above walks through five rounds of tit-for-tat in action. In Round 1, you cooperate unconditionally - be nice. Rounds 2 and 5 show the mirroring rule: when the opponent cooperates, you cooperate back. Round 3 is the critical moment - the opponent defects, and you immediately retaliate with your own defection. But in Round 4, the moment they return to cooperation, you forgive instantly and cooperate again. Three simple rules, no memory beyond the last move, and yet this strategy held its own against far more complex ones in Axelrod's tournaments. The chart below illustrates the result:

Based on Axelrod's ecological simulation. Always Defect (red) surges early to 43% by exploiting cooperators - but once Always Cooperate collapses, defectors have no easy prey left. Tit-for-Tat (purple) rises from 25% to 68%, thriving through mutual cooperation while punishing defection.

- Tit-for-Tat: cooperate first, then copy whatever the opponent did last round.

- Always Cooperate: cooperate every round no matter what. Easily exploited.

- Always Defect: defect every round. Wins short-term, collapses when victims disappear.

- Random: flip a coin each round. No strategy, steady background noise.

The chart above is an evolutionary simulation inspired by Axelrod's ecological tournament. Here's how it works: imagine a population where four types of “animals” coexist - each using a different strategy. Every generation, they all play the Prisoner's Dilemma against each other. Strategies that score more total points “reproduce” - their share of the population grows. Strategies that score poorly shrink. It's survival of the fittest, applied to strategies instead of species.

“Always Defect” (red) surges early because there are plenty of cooperators to take advantage of. But it's a short-lived boom - once the easy targets are gone, defectors are stuck playing against other defectors, and everyone loses. Meanwhile, Tit-for-Tat (purple) quietly builds alliances with friendly strategies and refuses to be pushed around by hostile ones. As the defectors run out of victims and collapse, Tit-for-Tat's steady partnerships carry it from 25% to 68% of the population.
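The rise-and-fall pattern can be reproduced with a rough sketch of such a simulation. Everything specific here is an assumption for illustration: the point values (3 each for mutual cooperation, 1 each for mutual defection, 5 and 0 when one side exploits the other), the 200-round matches, the equal starting shares, and the simple "share grows in proportion to score" update rule. The exact percentages will therefore differ from the chart.

```python
import random

# Assumed points per round as (row player, column player); higher is better.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5), ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

# Each strategy sees the opponent's past moves and a random number generator.
STRATEGIES = {
    "Tit-for-Tat": lambda seen, rng: seen[-1] if seen else "C",
    "Always Cooperate": lambda seen, rng: "C",
    "Always Defect": lambda seen, rng: "D",
    "Random": lambda seen, rng: rng.choice("CD"),
}


def average_payoff(a, b, rounds, rng):
    """Average per-round score of strategy a in a match against strategy b."""
    seen_by_a, seen_by_b, total = [], [], 0
    for _ in range(rounds):
        move_a, move_b = a(seen_by_a, rng), b(seen_by_b, rng)
        total += PAYOFF[(move_a, move_b)][0]
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return total / rounds


def evolve(generations=100, rounds=200, seed=0):
    """Population shares per generation; shares grow in proportion to score."""
    rng = random.Random(seed)
    names = list(STRATEGIES)
    score = {(i, j): average_payoff(STRATEGIES[i], STRATEGIES[j], rounds, rng)
             for i in names for j in names}
    shares = {name: 1 / len(names) for name in names}
    history = [shares]
    for _ in range(generations):
        fitness = {i: sum(score[i, j] * shares[j] for j in names) for i in names}
        mean = sum(fitness[i] * shares[i] for i in names)
        shares = {i: shares[i] * fitness[i] / mean for i in names}
        history.append(shares)
    return history


history = evolve()
for name, share in history[-1].items():
    print(f"{name}: {share:.1%}")
```

Under these assumptions Always Defect gains ground in the first few generations and then fades, while Tit-for-Tat ends up with the largest share.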

Repeated interactions are the foundation of trust in business relationships, international diplomacy, and even personal relationships. When we know we'll face the same players tomorrow, revenge and reputation suddenly matter - often enough to overcome the temptation of short-term gains.

Asymmetric Information: When Knowledge is Power

What happens when players don't have equal information? Welcome to the world of asymmetric information games, where one party knows something the other doesn't. These situations create fascinating strategic dynamics that permeate markets, negotiations, and everyday interactions.

Consider buying a used car - the seller knows if it's a reliable vehicle or a “lemon” (slang for a defective car), but you don't. This information gap creates strategic challenges that shape markets, sometimes causing them to break down. Economists call this “the market for lemons” problem, after George Akerlof's 1970 paper of that name: buyers' inability to distinguish good products from bad can drive high-quality options out of the market entirely.

The illustration traces this death spiral in four stages. In a healthy market (Stage 1), good cars and lemons coexist. But because buyers can't inspect quality upfront (Stage 2), they offer an average price - too low for good cars, too high for lemons. Rational sellers of good cars withdraw (Stage 3), tilting the mix toward lemons, which pushes the average price even lower, driving out more good sellers, until only lemons remain (Stage 4). The bottom half shows how we fight back: sellers can signal quality (warranties, degrees), buyers can screen (inspections, interviews), or institutions can design rules that make honesty the dominant strategy (auction formats, insurance deductibles).
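The spiral is easy to reproduce with toy numbers. In this sketch, ten hypothetical cars have seller reserve prices from $1,000 to $10,000, and buyers are assumed to offer 25% more than the average value of whatever is still for sale, since they cannot tell the cars apart. Both the prices and the 25% premium are assumptions made up for illustration.

```python
def unravel(reserve_prices, buyer_premium=1.25):
    """Return the cars left on the market after each round of withdrawals."""
    remaining = sorted(reserve_prices)
    stages = [remaining]
    while True:
        # Buyers cannot inspect quality, so they offer one price based on the average.
        offer = buyer_premium * sum(remaining) / len(remaining)
        # Sellers whose car is worth more to them than the offer withdraw.
        staying = [price for price in remaining if price <= offer]
        if staying == remaining:
            return stages
        remaining = staying
        stages.append(remaining)


cars = [1000 * n for n in range(1, 11)]  # hypothetical reserve prices
for stage in unravel(cars):
    print(len(stage), "cars left, best one worth", stage[-1])
```

With these numbers the market shrinks from 10 cars to 6, 4, 3, 2, and finally 1: only the worst car is still for sale.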

Job interviews, insurance markets, and auctions all operate in the shadow of asymmetric information. Warranties serve as costly signals of quality, screening processes help sort counterparties, and carefully designed auction formats create incentives for honest revelation of private information - all solutions informed by game theory principles.

Fascinating Real-World Applications

Game theory has transformed how we understand human behavior across countless domains. Perhaps most dramatically, it has shaped international politics and security doctrine. During the Cold War, the frightening concept of Mutually Assured Destruction (MAD) helped maintain an uneasy peace between nuclear powers. This strategy, where launching nuclear weapons would assure the attacker's own destruction, created a Nash equilibrium where neither side had an incentive to strike first.

In the business world, game theory informs countless strategic decisions. Airlines continuously adjust ticket prices based on competitors' moves. Marketing departments find themselves trapped in advertising “arms races” where they'd collectively benefit from spending less, but can't risk unilateral disarmament without losing market share.

Even simple games like Rock-Paper-Scissors have deep mathematical insights - optimal strategy involves randomizing your choices perfectly. Since humans aren't truly random, skilled players exploit subtle patterns. And in traffic, adding new roads sometimes worsens congestion - a surprising insight known as Braess's Paradox.

Behavioral Game Theory: When Players Aren't Perfectly Rational

Traditional game theory assumes players are perfectly rational, but humans rarely are. Behavioral game theory bridges this gap by incorporating psychological realities into strategic analysis. Experiments consistently show we care about fairness, sometimes punishing others even at personal cost. We have limited foresight, struggle with complex calculations, and exhibit predictable biases.

The famous “Ultimatum Game” perfectly illustrates this: one player proposes how to split a sum of money, and the second can accept or reject the offer; if they reject, both get nothing. Purely self-interested players would accept any positive amount, but real humans typically reject “unfair” offers below roughly 30% of the total, demonstrating how social norms influence strategic behavior.

The chart shows acceptance rates from hundreds of lab experiments across cultures. At a 10% offer, only about 10% of people accept - they'd rather walk away with nothing than reward unfairness. The dashed line marks the “fairness threshold” around 30% - not a calculated number, but the empirically observed tipping point where more than half of people start rejecting. Economic theory says this is irrational (free money is free money), but humans consistently choose to punish perceived unfairness, even at personal cost.

This willingness to reject “unfair” offers creates a social enforcement mechanism that shapes strategic behavior and promotes more equitable outcomes than pure self-interest would predict.

Mechanism Design: Engineering the Rules

Sometimes called “reverse game theory,” mechanism design asks: what rules should we create to achieve desired outcomes? It's essentially social engineering with mathematical precision.

The key insight: don't hope people will do the right thing - design the rules so that doing the selfish thing is the right thing. Take auctions: say a painting is worth $100 to you. In an ordinary auction where the winner pays their own bid, you might bid $80, hoping to snag a deal. But in a second-price auction, the winner pays the second-highest bid, not their own. So if you bid your true value ($100) and the next person bids $70, you win but only pay $70. Bidding lower never reduces the price you pay; it only risks losing to someone who bids $90. Bidding higher only risks winning at a price above what the painting is worth to you. Honesty is the best strategy no matter what others bid. Insurance deductibles mean you share the cost of a claim, so you have a reason to drive more carefully. Uber's surge pricing makes fares go up when cars are scarce, which pulls more drivers onto the road without anyone having to coordinate it.
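The second-price auction claim can be checked by brute force. The sketch below assumes a sealed-bid auction in which ties go to the rival; `surplus` is a hypothetical helper that returns what you gain (your value minus the price) for a given bid and best rival bid.

```python
def surplus(my_bid, my_value, best_rival_bid):
    """Second-price payoff: win only with the strictly highest bid, pay the rival's bid."""
    return my_value - best_rival_bid if my_bid > best_rival_bid else 0


VALUE = 100  # what the painting is worth to you

print(surplus(100, VALUE, 70))  # 30: truthful bid wins, price is the rival's 70
print(surplus(80, VALUE, 90))   # 0: shading the bid loses to the 90 bidder
print(surplus(100, VALUE, 90))  # 10: the truthful bid would have won at 90

# Compare the truthful bid with every whole-dollar alternative from 0 to 200.
truthful_never_worse = all(
    surplus(VALUE, VALUE, rival) >= surplus(bid, VALUE, rival)
    for bid in range(0, 201)
    for rival in range(0, 201)
)
print(truthful_never_worse)  # True
```

No alternative bid ever beats the truthful one, and some do strictly worse, which is what it means for honest bidding to be a dominant strategy in this format.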

Dating apps design algorithms to create stable matches. The FCC has raised billions through carefully designed spectrum auctions. Governments structure tax incentives to encourage desired behaviors. This Nobel Prize-winning field (Leonid Hurwicz, Eric Maskin, Roger Myerson, 2007) helps us design systems where even self-interested participants are naturally guided toward socially beneficial outcomes.

How Game Theory Shapes Artificial Intelligence

Today, game theory is deeply influencing Artificial Intelligence. AI researchers leverage its insights to build smarter, more strategic machines through methods like self-play reinforcement learning. Systems like AlphaZero learned games like chess by playing against themselves millions of times, steadily improving their strategies. In two-player zero-sum games, this kind of self-play pushes toward strategies that are hard to exploit, which is the idea behind a Nash equilibrium.

AI also employs game theory through Generative Adversarial Networks (GANs), where two neural networks compete in a game that is zero-sum in its original formulation - one creating fakes, the other detecting them - until, ideally, the generator's output becomes hard to distinguish from real data.

But perhaps the most elegant bridge between game theory and AI is Shapley values. Developed by Lloyd Shapley in 1953 as part of cooperative game theory, Shapley values solve a deceptively simple question: when a group of players cooperate to create value, how do you fairly divide the credit?

Here's a simple example. Three people - A, B, and C - start a business together. Alone, A would earn 2, B would earn 3, and C would earn 1. But together they earn 12 - way more than 2+3+1, because they amplify each other. So how do you split the 12 fairly? You can't just pay each person what they'd earn alone (that only adds up to 6). Shapley's answer: imagine every possible order the team could have been built. Sometimes A joins first, sometimes last. Each time, measure how much the total went up when that person walked in. Average those contributions across all orderings - that's their fair share. The result always adds up to the full 12, freeloaders get nothing, and people who contribute equally get paid equally.
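The averaging procedure can be written out directly. One caveat: the example above only says what each person earns alone and what all three earn together, so the values for the two-person teams below (A+B = 7, A+C = 5, B+C = 6) are assumptions added to make the calculation possible.

```python
from itertools import permutations

PLAYERS = ("A", "B", "C")
# Earnings of every possible team. Pair values are assumed for illustration.
VALUE = {
    frozenset(): 0,
    frozenset("A"): 2, frozenset("B"): 3, frozenset("C"): 1,
    frozenset("AB"): 7, frozenset("AC"): 5, frozenset("BC"): 6,
    frozenset("ABC"): 12,
}


def shapley_values():
    """Average each player's marginal contribution over all joining orders."""
    totals = {player: 0 for player in PLAYERS}
    orderings = list(permutations(PLAYERS))
    for order in orderings:
        team = frozenset()
        for player in order:
            joined = team | {player}
            # How much the team's earnings rose when this player walked in.
            totals[player] += VALUE[joined] - VALUE[team]
            team = joined
    return {player: totals[player] / len(orderings) for player in PLAYERS}


print(shapley_values())  # {'A': 4.0, 'B': 5.0, 'C': 3.0}
```

With these assumed pair values the fair shares are 4, 5, and 3, which add up to the full 12. Different pair values would give a different split, but the total would still be 12.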

This concept has been adapted for AI explainability through SHAP (SHapley Additive exPlanations). In a machine learning model, each input feature is a “player” and the prediction is the “coalition value.” SHAP values estimate how much each feature pushed a prediction up or down from the baseline - helping turn opaque black-box models into more transparent, accountable decision-making tools. It's game theory helping make AI more trustworthy.

Looking forward, game theory's influence will likely grow, guiding AI development in safety, cooperation, and human-machine interactions. Human-AI cooperation, AI safety through equilibrium analysis, and multimodal adversarial training are all active frontiers where game theory provides the foundational framework.

Game Theory Is Closer Than You Think

Game theory isn't just abstract math - it's all around us. Every time you choose to cooperate, compete, or negotiate, you're playing a game. Recognizing these patterns helps explain why our world sometimes behaves irrationally - or brilliantly rationally - depending on perspective.

From geopolitics to your daily commute, game theory quietly shapes your decisions and outcomes, providing powerful tools to navigate life strategically.

So next time you're deciding whether to trust, negotiate, or compete, remember - you're already in the game.

Isn't it time you played wisely?